How much control are you willing to give up to AI agents?

I've been thinking a lot about AI agents lately, and, as usually happens when AI is involved, many contrasting feelings bubble up.

On one side: excitement, potential, the pressure to "automate everything now or get left behind." On the other: unease, watching agents take actions I didn't authorize, feeling control (and mastery) slip through my fingers.

I've been swinging between the two quite a lot, and that swinging is exactly what pushed me to think more carefully about how I want to approach agents, both for my own workflow and for the products I build.

Because there is one thing I fear most of all: the unexpected consequences of ambition outpacing understanding.

Yep. Let that sink in, because it's what we are seeing more and more when we dive headfirst into something and get pulled down the magic rabbit hole of AI.

Let me be clear from the beginning: the question here is not whether we should use agents. That is going to happen whether we want it or not.

The question is: how much control are you (or your users) willing to give up? And for what in return?

As often happens in product, the key to knowing how to act lies in understanding user behavior, so that is where I started.

Design from behavior, not technology

For the past 10 years I've worked with digital marketplaces where people make life-defining decisions, like buying a home. In the Nordics, structural laws make speculation rare, which means that buying is personal: for yourself, for your family, not a commercial bet.

Because buyers are so emotionally attached to the purchase, the FOMO of not seeing every option has been a thing for years, and so has the desire to feel in control of the process, or to put your faith in a trusted expert, whether that's your real estate agent or your property portal.

That need for control won't disappear. When stakes are high and decisions are personal, people want to stay close. They want trust. They won't tolerate hallucinations or missed opportunities.

You might be thinking: no agents then. Well, not so fast.

I would definitely not discard agents, because:

1- They are already extremely important for being visible wherever your users are

2- They can be that "magic helping layer" that many users would happily use in their property journeys

The key is to understand which kind of agent is appropriate for your context. And that requires starting from how users actually behave before deciding how much autonomy to build in.

A way of thinking about agents: how much control are you willing to give up?

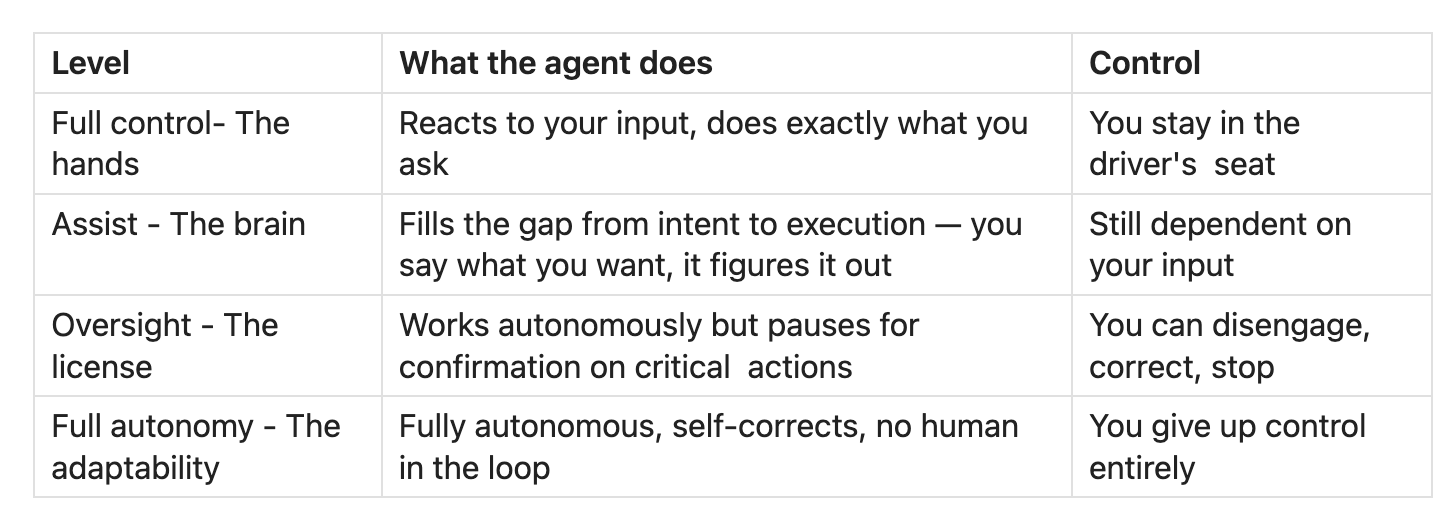

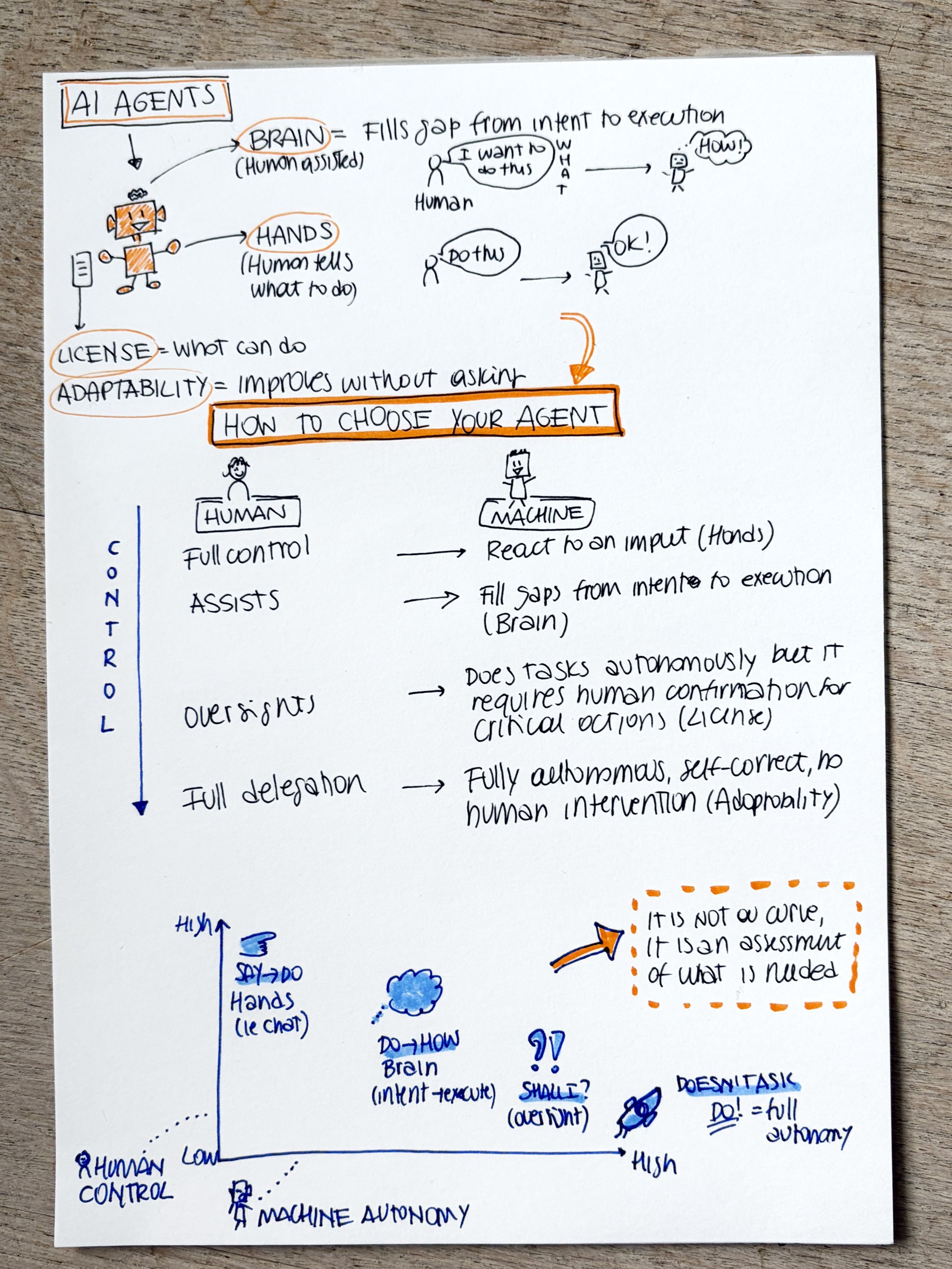

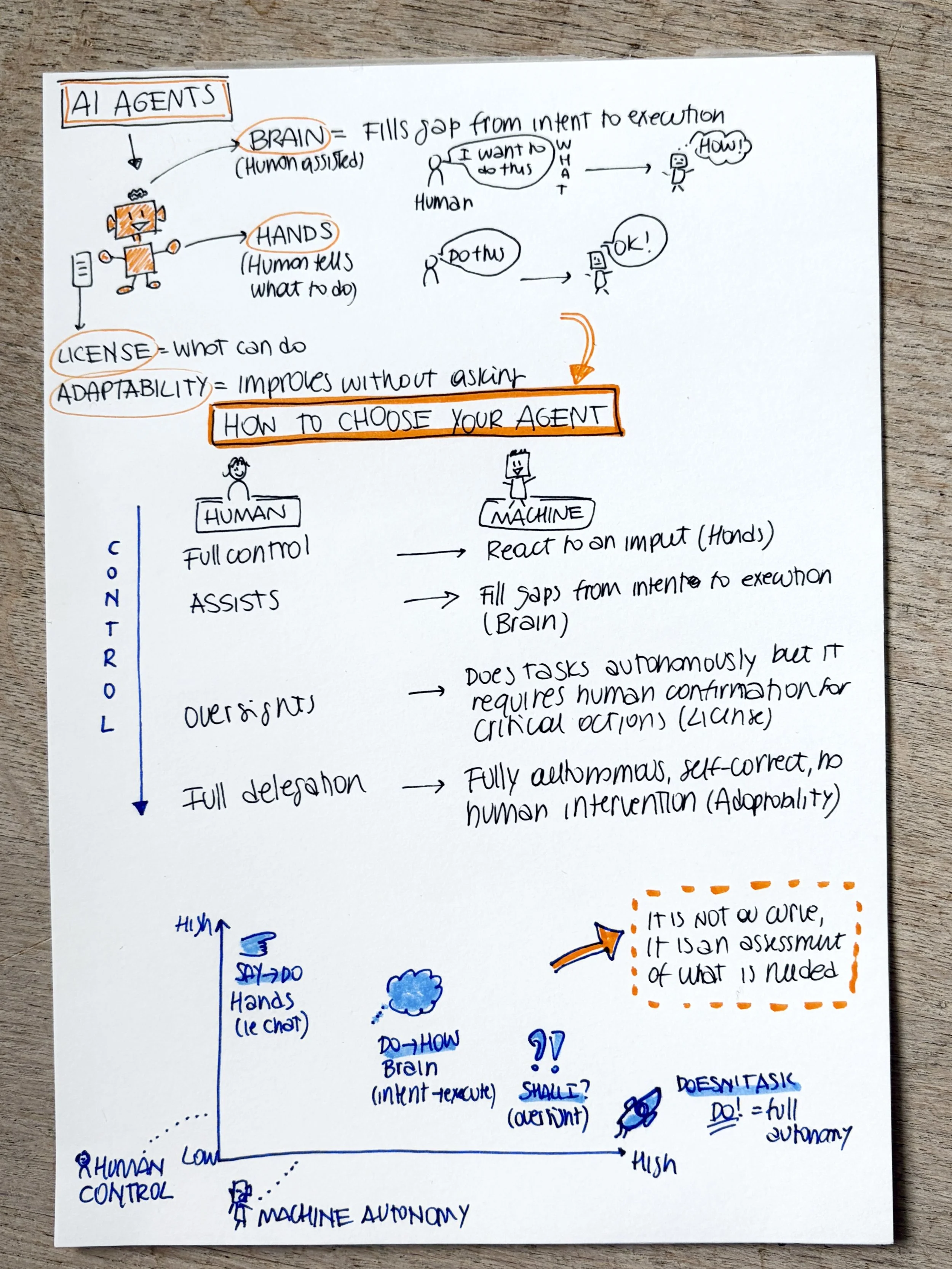

At my current company, Vend, I have the great luxury of being able to bounce ideas with AI experts, that we call "department of AI". Thanks to discussions with them I realized that not all agents are the same. From those discussions I created a simple mental model to think about agents and their connection to control.

I use it a lot for thinking about how to insert them in the products, as well as in my personal workflow.

Here is how my mental model goes:

One important realization was that the levels are not a progression toward full delegation; they are an assessment of what is needed.

Your product or your flow is not necessarily better if it is fully autonomous. On the contrary, it can be much worse, for you or your users.

Take an example from my personal workflow: I could create agents that write and create content for me. I have enough "samples" for them to work from and recognize "my style". But do I want to fully delegate my writing, and my thinking? Hell, no!

When you fully delegate something, that muscle atrophies, and I certainly do not want my writing or thinking skills to go down that path.

Just because you can delegate, it doesn't mean you should.

The right level depends on the stakes, your comfort, and how much trust has been built in the final result.

The design challenge: how do you create trust in autonomy?

So one part of the solution is thinking about how willing people are to give up control, but that isn't the full picture. There is another dimension to the whole agent conversation: meeting people's expectations where they are, while creating that "wow" moment.

The more I think about it, the more I realize that for people outside my "AI-focused product bubble", the AI experience is still at the chat or summary level: Google's AI overviews, customer service bots, some writing help. The "wow" moment that shifts behavior and builds deeper trust hasn't happened for most users yet.

What shifted mine was Claude Code. It knows my preferences, my style and my patterns, but that's not why I trust it. I trust it because I can see exactly what it knows, edit it, and shape it over time. And that knowledge is stored locally on my machine. The magic, for me, isn't the AI acting behind my back; it's the combination of transparency, my input, and the right level of autonomy working together.

Chat interfaces are great for collecting user input at scale: you can learn what users want in ways that weren't possible before. But building trust requires more. It requires showing users what the agent knows about them, letting them shape and improve the outcome, and letting them stay in the loop at the level that fits the decision they need to make.

This matters not only for product design but also for transparency and ethics, which we should all treat as grounding principles in our work.

Agents are powerful, but understanding users comes first.

The real question isn't which agent to adopt. It's: what behaviors apply to your users? What decisions are they making? How much control are they willing to give up, and what will the experience need to deliver in return?

Start from behavior, then choose the agent. And before you build anything: ask yourself what you're willing to give up, and whether your users would agree.